Material testing sits at the point where engineering assumptions become decisions. Design teams specify requirements, production teams run processes, and QA has to confirm that the material delivered today behaves within the limits the part was designed for. The goal is consistent, comparable property data that supports material selection, process control, and product acceptance.

In the context of metals and plastics, material testing is the standardized measurement of how a material responds to mechanical loads and service conditions. Labs typically focus on properties tied to the most common failure modes: permanent deformation, fracture, and loss of performance over time. That is why most workflows are built around mechanical methods that generate numbers engineers can compare across batches and suppliers.

In this article, we map the main testing families at a high level, explain how destructive and non-destructive approaches fit into a practical testing program, and then go deeper into the core mechanical tests used in many labs: tensile testing on universal testing machines, hardness testing, and impact testing. We also cover where additional methods like fatigue, creep, and fracture toughness become necessary, and finish with a clear framework for choosing the right test based on material type, service risk, and specification requirements.

What Is Material Testing?

Material testing is the controlled measurement of how a material responds to loads and operating conditions, so its properties can be quantified, compared, and checked against specifications. In industry, this work is standardized: methods are defined in ISO and ASTM documents to make results repeatable across labs, operators, and suppliers.

Even when the focus is metals and plastics, “material testing” is broader than one test type. At a high level it includes mechanical testing, thermal-property testing, electrical-property testing, resistance to corrosion or environmental exposure, and non-destructive testing. Each group has established procedures and reporting rules, which is why a test result is only meaningful when the method and conditions are known.

For most QA/QC labs working with metals and plastics, the core workload is mechanical. The aim is straightforward: reduce the risk of fracture and reduce the risk of excessive deformation in service. That’s why labs tend to center on three families of outputs. First are stress–strain properties from tensile testing, such as yield strength, tensile strength, elongation, and reduction of area. Second are hardness numbers from indentation methods like Rockwell, Brinell, or Vickers, plus Shore-type scales for polymers. Third is impact performance, typically reported as absorbed energy in tests like Charpy or Izod, with other variants used depending on material and industry.

Standards also define what, exactly, a lab is calculating and controlling. Tensile standards specify how strength values are derived from measured force and specimen dimensions, and they formalize common ductility metrics like reduction of area. They also treat specimen geometry as a controlled variable. For example, tensile standards include proportional specimen rules and fixed gauge-length conventions so elongation results are comparable from one lab to another.
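The calculations behind these definitions are simple. The sketch below assumes ISO 6892-1-style symbols: S0 is the original cross-section, L0 the original gauge length, Lu the final gauge length and Su the minimum cross-section after fracture. It also assumes the proportional coefficient k = 5.65. The force value in the usage lines is hypothetical.

```python
import math

# Common tensile calculations. Units: N, mm, MPa (1 N/mm^2 = 1 MPa).

def proportional_gauge_length(s0_mm2: float, k: float = 5.65) -> float:
    """Proportional gauge length L0 = k * sqrt(S0); k = 5.65 is the usual coefficient."""
    return k * math.sqrt(s0_mm2)

def engineering_stress_mpa(force_n: float, s0_mm2: float) -> float:
    """Strength values divide force by the ORIGINAL cross-section, not the necked one."""
    return force_n / s0_mm2

def elongation_after_fracture_pct(l0_mm: float, lu_mm: float) -> float:
    """Percentage elongation after fracture, A = (Lu - L0) / L0 * 100."""
    return (lu_mm - l0_mm) / l0_mm * 100.0

def reduction_of_area_pct(s0_mm2: float, su_mm2: float) -> float:
    """Percentage reduction of area, Z = (S0 - Su) / S0 * 100."""
    return (s0_mm2 - su_mm2) / s0_mm2 * 100.0

# Usage: 10 mm diameter round bar with a hypothetical 40 kN maximum force.
s0 = math.pi * 10.0**2 / 4                 # ~78.54 mm^2
l0 = proportional_gauge_length(s0)         # ~50.07 mm, about 5 x diameter
rm = engineering_stress_mpa(40_000.0, s0)  # ~509 MPa
```

The proportional rule ties L0 to the cross-section, so elongation values from bars of different diameters remain comparable.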

Finally, test conditions are part of the measurement. Tensile standards specify temperature ranges for “room-temperature” testing and define what counts as controlled conditions. ISO 6892-1, for example, sets 10 °C to 35 °C for room-temperature tests and 23 °C ± 5 °C where controlled conditions are required. The practical point is simple: if two labs test the same material under different temperatures or different setup conditions, the numbers can drift. Good reports capture the method, specimen type, and test conditions so the result can be interpreted and reproduced.

Why Material Testing Matters

Material testing matters because it turns material performance into controlled, auditable evidence. The key is understanding what each method can and cannot prove. Tensile standards, for example, are explicit that a tension test is performed on a standardized specimen taken from a selected portion of a part, and that the result may not fully represent the strength or ductility of the complete end product or its behavior in service environments. That boundary is useful: tensile data works best as a controlled comparison and an acceptance check, not as a perfect simulation of every real load case. At the same time, those same methods are widely used for acceptance testing of commercial shipments, which is why tensile testing is one of the main tools for supplier risk control and material certification in trade.

Safety and Reliability

Most engineering failures come down to two outcomes: fracture or unacceptable deformation. Material testing reduces that risk by making the relevant properties measurable before a part goes into service. Tensile testing quantifies strength and ductility margins. Hardness testing gives a fast indication of material condition and local variability. Impact testing screens for brittle behavior under rapid loading and notch-driven stress states, which quasi-static tensile results may not reveal. Standards for notched-bar impact testing note that, for some materials and temperatures, impact results correlated with service experience have been found to predict brittle fracture likelihood with good accuracy. This is why Charpy and Izod remain common screening tools in safety-critical workflows.

Compliance with Specifications and Standards

In regulated or specification-driven supply chains, “meeting spec” means more than a number on a certificate. It means the number was produced under a defined method, with a defined specimen geometry, test conditions, and reporting rules. ISO and ASTM test methods exist to make results comparable between laboratories. Without that framework, you can get two “valid-looking” results that can’t be reliably compared because the specimen type, conditioning, loading rate, or measurement chain differs.

Quality Control and Batch Stability

Production needs fast signals that a process stayed in control and that incoming material matches what was qualified. Hardness testing is widely used here because it is fast and local, and standards explicitly position Rockwell hardness as a practical QC and material selection tool. At the same time, hardness standards warn that a measurement at one location may not represent the whole part. That matters for components with gradients, such as case-hardened layers, weld heat-affected zones, or additively manufactured parts, where the surface and core can behave differently.

Process Validation (Heat Treatment, Welding, Forming, Composites)

Material testing is also used to validate processes, not just materials. Here, the details of sample preparation are part of the method. Impact testing standards make this point clearly: when evaluating heat-treated material, Charpy specimens should be finish-machined, including the notch, after the final heat treatment unless equivalence is demonstrated. The reason is practical. If you machine the notch before the last heat cycle, you can change the effective toughness you measure, so the default requirement is to machine after the final cycle. This is the same logic QA applies to other processes: the test specimen has to represent the final condition you are approving.

Main Types Of Material Testing

A practical way to group material testing is by the type of question you need to answer and the type of signal you can reliably measure. A widely used top-level frame includes mechanical testing, thermal-property testing, electrical-property testing, resistance to corrosion or environmental degradation, and non-destructive testing. For this article, mechanical testing stays in the foreground, while the other groups are included as short reference points so the picture is complete without drifting away from metals and plastics.

Mechanical methods map directly to how loads show up in service. Tensile and compression tests represent uniaxial loading. Bend and flexural methods represent bending loads. Hardness tests represent contact loading through indentation. Impact tests represent rapid, impulsive loading. Fatigue represents cyclic loading. Creep represents sustained load at temperature over time. Most labs organize their workflows the same way.

Key Testing Families In Practice

- Mechanical Testing: tension, compression, bending, shear, hardness, impact, fatigue, creep, wear.

- Physical Properties: density, dimensional stability with temperature, thermal expansion.

- Environmental And Durability Testing: controlled exposure followed by re-measurement, for example heat, moisture, chemicals, salt fog.

- Non-Destructive Testing: inspection methods designed to detect defects or discontinuities without damaging the part.

Destructive Vs. Non-Destructive Testing

Destructive testing is used when the required result is a property number that typically demands permanent deformation or fracture. That includes yield and tensile strength, elongation and reduction of area, hardness values from controlled indentation, and absorbed energy from impact testing. The sample is consumed by design because the failure event is part of the measurement.

Non-destructive testing is used when the goal is defect detection or condition assessment without harming the component, especially when parts are expensive, limited in quantity, or already in service. In practical terms, NDT is often described by what each method can “see.” Ultrasonic pulse-echo methods introduce sound and interpret returning reflections from internal geometry or imperfections. Magnetic particle testing magnetizes ferromagnetic materials and uses particles to reveal surface or near-surface discontinuities. In NDT-heavy industries, the method is also the people and procedure, not just the instrument. Personnel qualification and certification are part of how NDT quality is maintained.
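Pulse-echo interpretation depends on a time-of-flight conversion. The minimal sketch below assumes a straight-beam probe and a known sound velocity. The 5920 m/s figure in the usage line is an assumed typical longitudinal velocity for steel, and real inspections calibrate velocity on a reference block.

```python
def reflector_depth_mm(round_trip_time_us: float, velocity_m_per_s: float) -> float:
    """Depth of a reflector from a pulse-echo time of flight.

    The pulse travels to the reflector and back, so the one-way depth is half
    the round-trip distance. Assumes a single straight-beam (0 degree) probe and
    a calibrated velocity for the material.
    """
    round_trip_m = velocity_m_per_s * round_trip_time_us * 1e-6
    return round_trip_m / 2.0 * 1000.0  # metres -> millimetres

# Usage: a 10 microsecond echo at an assumed 5920 m/s gives roughly 29.6 mm.
depth = reflector_depth_mm(10.0, 5920.0)
```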

In real lab programs, DT and NDT are typically combined, not chosen as alternatives. DT establishes baseline mechanical performance and acceptance limits on representative specimens or witness coupons. NDT then extends control to every part or to in-service assets by screening for discontinuities, verifying process consistency, and supporting inspection plans where destructive sampling is impractical.

Core Mechanical Tests Used In Material Testing Labs

Most material testing labs standardize around a small set of mechanical methods because they cover the highest share of engineering questions with the lowest ambiguity. These tests produce outputs that are widely specified on drawings, material certificates, and customer requirements. They also scale well: you can run them as acceptance checks in production or as controlled comparisons in development, as long as specimen preparation, test conditions, and verification are disciplined.

Tensile Testing

Tensile testing is the baseline method for quantifying strength and ductility. In practice, labs use it to report yield behavior, ultimate tensile strength, elongation, and reduction of area. Those outputs are defined in standards language so two labs can measure the same property and mean the same thing.
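When a material has no distinct yield point, "yield behavior" is usually reported as an offset (proof) strength such as Rp0.2. The sketch below shows one way to calculate it from stress–strain data. It fits the modulus over a strain window that the user assumes to be linear-elastic, shifts that line by 0.2 % strain, and interpolates the crossing point. The function names and the synthetic bilinear curve are illustrative only.

```python
from typing import Sequence, Tuple

def linear_fit(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares slope and intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx

def offset_yield_strength(strain, stress, elastic_window, offset=0.002):
    """Offset (proof) strength, e.g. Rp0.2 for offset = 0.002.

    elastic_window: (lo, hi) strain range assumed linear-elastic. The modulus is
    fitted there, the line is shifted by `offset` strain, and the result is where
    the measured curve first drops below that shifted line.
    """
    lo, hi = elastic_window
    pts = [(e, s) for e, s in zip(strain, stress) if lo <= e <= hi]
    modulus, intercept = linear_fit([p[0] for p in pts], [p[1] for p in pts])

    def gap(i):  # measured stress minus offset-line stress at point i
        return stress[i] - (modulus * (strain[i] - offset) + intercept)

    for i in range(1, len(strain)):
        g0, g1 = gap(i - 1), gap(i)
        if g0 > 0 >= g1:
            t = g0 / (g0 - g1)  # linear interpolation between the bracketing points
            return stress[i - 1] + t * (stress[i] - stress[i - 1])
    raise ValueError("curve never crosses the offset line")

# Synthetic bilinear curve: E = 200 GPa, yield 400 MPa, hardening slope 2 GPa.
E, sy, h = 200_000.0, 400.0, 2_000.0
strain = [i * 1e-4 for i in range(101)]
stress = [E * e if e <= sy / E else sy + h * (e - sy / E) for e in strain]
rp02 = offset_yield_strength(strain, stress, elastic_window=(0.0, 0.0015))  # ~404.04 MPa
```

The chosen elastic window has a large effect on the result. This is one reason strain measurement and alignment matter so much for yield values.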

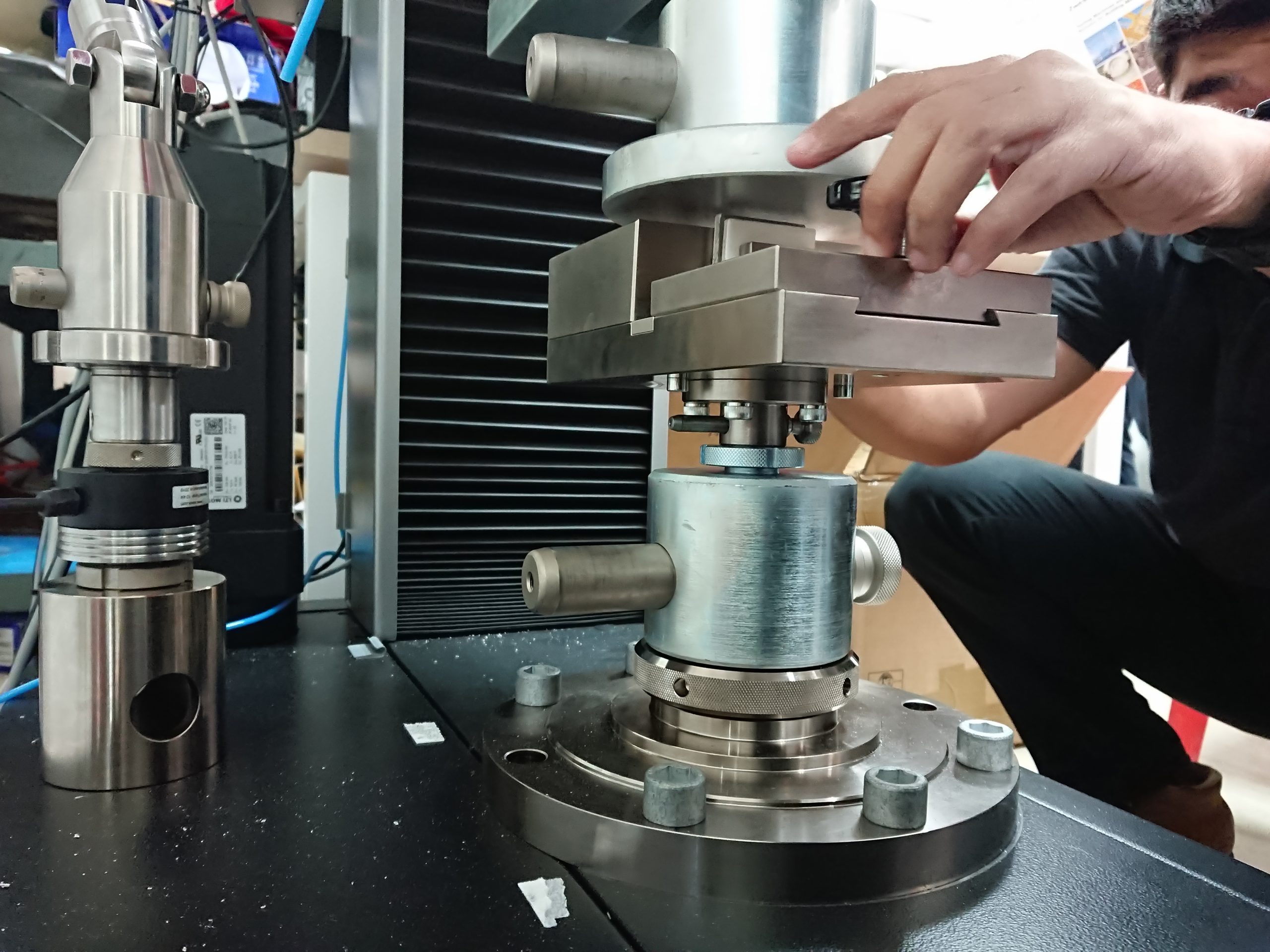

A universal testing machine is used for more than simple tension. With the right fixtures, the same frame commonly covers tension, compression, and bend or flexural configurations. That makes it the main workhorse for metals, plastics, and many composite programs.

What drives result quality is rarely the load cell alone. The highest-impact variables are:

- Specimen Geometry And Preparation: gauge length conventions, machining quality, surface condition, and how representative the sampling is.

- Rate Control: standards distinguish between controlling by strain rate versus stress rate. In most practical setups, controlling strain rate reduces variation when materials are rate-sensitive.

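- Strain Measurement: extensometry vs. crosshead displacement is a decision that directly affects strain-based outputs. Crosshead displacement also includes the elastic stretch of the frame, load train, and grips, so it overstates specimen strain unless corrected.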

- Alignment And Gripping: axial loading depends on the specimen axis matching the machine center line. Misalignment introduces bending components that are not part of the intended stress calculation and can distort reported values.

- Environment: temperature and conditioning influence results, especially for polymers and for temperature-sensitive metal behavior.
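Two of these variables can be made concrete with simple arithmetic. The sketch below converts a target strain rate into a crosshead speed using v = Lc × strain rate over the parallel length Lc. It also shows how crosshead-based strain changes once the load-train stretch is removed. The single linear compliance value is a simplifying assumption, and all numbers in the usage lines are hypothetical.

```python
def crosshead_speed_mm_per_min(parallel_length_mm: float, strain_rate_per_s: float) -> float:
    """Crosshead separation rate giving a target strain rate over the parallel length.

    v = Lc * strain_rate. This ignores machine and grip compliance, so during
    elastic loading the actual specimen strain rate is lower than the target.
    """
    return parallel_length_mm * strain_rate_per_s * 60.0

def strain_from_crosshead(crosshead_mm: float, force_n: float,
                          compliance_mm_per_n: float, parallel_length_mm: float) -> float:
    """Approximate specimen strain from crosshead travel minus load-train stretch.

    Assumes one linear compliance (mm/N) for frame, load cell and grips, which
    is a simplification; an extensometer avoids this correction altogether.
    """
    specimen_extension = crosshead_mm - force_n * compliance_mm_per_n
    return specimen_extension / parallel_length_mm

v = crosshead_speed_mm_per_min(60.0, 0.00025)         # ~0.9 mm/min
e = strain_from_crosshead(0.5, 20_000.0, 1e-5, 60.0)  # 0.3 mm / 60 mm = 0.005
```

Without the correction, the same crosshead reading would suggest a strain of 0.5 / 60 ≈ 0.0083, which illustrates why strain-based acceptance values normally call for extensometry.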

Where it’s used: incoming verification of mill products, process qualification, lot-to-lot comparisons, and as a reference test when hardness or impact data needs to be tied back to strength and ductility.

Hardness Testing

Hardness testing measures resistance to indentation under a defined indenter and force cycle. It’s popular in QC because it is fast, local, and often possible directly on a finished part or a sectioned coupon. The correct way to treat hardness is as an empirical measurement that is highly useful within a controlled method, not as a universal substitute for tensile or fatigue data.

Where hardness is the best quick control:

- Heat Treatment Verification: confirming that a thermal cycle produced the intended material condition.

- Incoming Inspection: screening supplier lots quickly before deeper testing.

- In-Process Checks: monitoring drift across a production run, especially when destructive sampling is limited.

Method selection is usually driven by material type, section thickness, and microstructure:

- Rockwell: depth-based, fast, common in production. Superficial scales exist for thinner sections and surface layers.

- Brinell: indentation diameter-based. Often used on castings, forgings, and materials with coarser or more heterogeneous structures.

- Vickers/Knoop: diamond pyramid micro and macro hardness for thin layers, coatings, case depth profiles, and small features where localized measurement matters.

- Shore/IRHD: common QC language for plastics and elastomers. Useful for control and comparisons when the method is held constant.
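The scales differ in what they measure, and this is easier to see from their defining formulas. The sketch below computes Vickers, Brinell, and Rockwell numbers from the raw indentation measurement. Force is in newtons and lengths are in millimetres. The 0.102 factor converts to the kilogram-force basis on which the scales were originally defined. The readings in the usage lines are hypothetical.

```python
import math

def vickers_hv(force_n: float, mean_diagonal_mm: float) -> float:
    """HV = 0.102 * 2 F sin(136 deg / 2) / d^2 (about 0.1891 F / d^2)."""
    return 0.102 * 2.0 * force_n * math.sin(math.radians(136.0 / 2.0)) / mean_diagonal_mm**2

def brinell_hbw(force_n: float, ball_diameter_mm: float, mean_indent_diameter_mm: float) -> float:
    """HBW = 0.102 * 2F / (pi D (D - sqrt(D^2 - d^2))): force over curved indent area."""
    D, d = ball_diameter_mm, mean_indent_diameter_mm
    return 0.102 * 2.0 * force_n / (math.pi * D * (D - math.sqrt(D**2 - d**2)))

def rockwell(permanent_depth_mm: float, full_scale: float = 100.0, unit_mm: float = 0.002) -> float:
    """HR = N - h / S, depth-based. Defaults correspond to HRC (N = 100, S = 0.002 mm)."""
    return full_scale - permanent_depth_mm / unit_mm

hv30 = vickers_hv(294.2, 0.400)         # ~348 HV30
hbw = brinell_hbw(29_420.0, 10.0, 4.0)  # ~229 HBW 10/3000
hrc = rockwell(0.080)                   # 60 HRC
```

Vickers and Brinell depend on an optical size measurement. Rockwell depends on a depth difference, which is why Rockwell is fast but sensitive to surface condition and support.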

Standardized procedure and verification are not optional in hardness work because the number is sensitive to setup details. Indenter condition, force accuracy, dwell timing, surface preparation, and instrument verification all affect comparability. A single lab can get stable trends with minimal effort, but comparing hardness values between labs requires traceable verification practices and consistent technique, especially when testing gradients such as case-hardened layers, weld zones, or layered polymer products.
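A routine check against a certified reference block usually reduces to two numbers: bias (the mean reading minus the certified value) and spread (a repeatability indicator). The sketch below computes both. The limits are deliberately left as parameters, and the values in the usage line are hypothetical placeholders. Actual permissible limits come from the hardness standard and scale in use.

```python
from statistics import mean

def check_reference_block(readings, certified_value, max_error, max_range):
    """Compare readings on a certified hardness reference block.

    error: mean reading minus the certified value (bias).
    range: max minus min reading (simple repeatability indicator).
    max_error / max_range are placeholders; take real limits from the standard.
    """
    bias = mean(readings) - certified_value
    spread = max(readings) - min(readings)
    return {
        "error": bias,
        "range": spread,
        "ok": abs(bias) <= max_error and spread <= max_range,
    }

# Hypothetical: five HRC readings on a block certified at 45.0 HRC,
# checked against illustrative limits (not taken from any standard).
result = check_reference_block([45.2, 45.4, 44.9, 45.3, 45.1], 45.0, max_error=1.0, max_range=1.0)
```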

Impact Testing

Impact testing is used when failure risk is governed by rapid loading and notch sensitivity. It is one of the few standardized lab methods designed to screen how a material behaves when the loading rate is high and the stress state is intensified by a notch. That makes it practical for comparing heats, validating heat treatment, and assessing performance at low temperatures where brittle behavior becomes a concern.

Charpy and Izod are similar in purpose but different in configuration. In standards terminology, Charpy is a simple-beam notched-bar configuration, while Izod is a cantilever-beam configuration. In both cases, the primary reported output is absorbed energy. Some programs also evaluate behavior across temperatures to understand transition characteristics rather than relying on a single-point result.
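On a pendulum machine, absorbed energy is a potential-energy difference between the release height and the rise height after the specimen breaks. The sketch below assumes angles are measured from the vertical rest position and uses the distance from pivot to centre of mass. It treats friction and windage as one constant loss, which is a simplification. The 80 % "near capacity" threshold is an assumption that mirrors a common convention. The pendulum values are hypothetical.

```python
import math

G = 9.80665  # standard gravity, m/s^2

def absorbed_energy_j(mass_kg, cg_distance_m, release_deg, rise_deg, friction_loss_j=0.0):
    """Absorbed energy = m*g*l*(cos(rise) - cos(release)) minus a measured friction loss."""
    mgl = mass_kg * G * cg_distance_m
    return mgl * (math.cos(math.radians(rise_deg)) - math.cos(math.radians(release_deg))) - friction_loss_j

def initial_potential_energy_j(mass_kg, cg_distance_m, release_deg):
    """Energy available at release, relative to the lowest point of the swing."""
    return mass_kg * G * cg_distance_m * (1.0 - math.cos(math.radians(release_deg)))

def near_capacity(energy_j, capacity_j, fraction=0.8):
    """Flag results in the upper part of the machine range (threshold is an assumption)."""
    return energy_j > fraction * capacity_j

# Hypothetical pendulum: 20 kg, centre of mass 0.8 m from the pivot,
# released at 150 deg, rising to 120 deg after breaking the specimen.
kv = absorbed_energy_j(20.0, 0.8, 150.0, 120.0)    # ~57.4 J
kp = initial_potential_energy_j(20.0, 0.8, 150.0)  # ~292.8 J
```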

Impact testing is also sensitive to controls that are easy to underestimate:

- Specimen Geometry: notch type and notch quality materially influence results.

- Temperature Conditioning And Transfer Time: if testing at non-ambient temperatures, specimen handling and timing become part of the method.

- Machine Losses And Condition: friction losses, striker condition, and general machine health affect the absorbed energy scale.

- Capacity Selection: operating too close to the machine’s energy limit reduces confidence in the reported value.

Because absorbed energy is derived from the machine’s energy scale, verification and calibration are central to comparability. Programs that treat impact testing as a serious screening tool use periodic verification with certified specimens and documented checks of machine performance. Without that, labs can generate neat-looking numbers that do not travel well across sites or across time.
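Indirect verification compares the mean result on certified reference specimens with their certified energy. The sketch below applies a "larger of absolute or relative tolerance" rule. The 1.4 J / 5 % defaults reflect the criterion commonly cited from ASTM E23, but they are assumptions here and should be confirmed against the edition and standard your lab follows. The specimen results are hypothetical.

```python
from statistics import mean

def indirect_verification_ok(measured_j, certified_j, abs_tol_j=1.4, rel_tol=0.05):
    """Pass if |mean - certified| is within max(absolute, relative) tolerance.

    Default tolerances are assumptions modelled on a commonly cited ASTM E23
    criterion; confirm against the standard edition in use.
    """
    deviation = abs(mean(measured_j) - certified_j)
    return deviation <= max(abs_tol_j, rel_tol * certified_j)

high_ok = indirect_verification_ok([102.0, 97.0, 104.0, 99.0, 101.0], 100.0)  # mean 100.6 J
low_ok = indirect_verification_ok([16.5, 17.0, 16.9], 15.0)                  # mean 16.8 J
```

At low energy levels the absolute term dominates. At high energy levels the relative term dominates, so a single fixed tolerance would be either too loose or too strict across the machine range.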

Additional Mechanical Tests

Tensile, hardness, and standard impact tests cover a large share of QC needs, but they don’t represent every service condition. When the governing risk involves compressive instability, bending-driven cracking, laminate shear response, cyclic damage, long-term deformation at temperature, or crack tolerance, labs add targeted mechanical methods. The goal is not to build a bigger test menu. The goal is to match the test to the dominant failure mode and report the parameters that engineering decisions actually depend on.

Compression Testing

Compression testing is used when parts operate primarily in compression or when geometry introduces buckling constraints. For metallic materials, standards define axial-load compression procedures at room temperature. In practice, alignment and anti-buckling fixtures can be as important as frame capacity, because a specimen that buckles early stops being a compression test and turns into a stability problem.
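A quick way to judge whether buckling could compete with true compressive response is the elastic Euler estimate. The sketch below applies it to a solid round specimen. The end-fixity factor K = 0.5 assumes fully fixed ends, an idealization that real platens only approximate. The estimate governs only when the critical stress falls below the yield strength; otherwise the specimen yields or buckles inelastically first. This is a general mechanics screen, not a procedure taken from a compression standard.

```python
import math

def euler_critical_stress_mpa(modulus_mpa, diameter_mm, length_mm, end_factor=0.5):
    """Elastic buckling stress sigma_cr = pi^2 E / (K L / r)^2 for a solid round bar.

    Radius of gyration r = d / 4 for a solid circle. end_factor K = 0.5 assumes
    fully fixed ends (an idealisation).
    """
    r = diameter_mm / 4.0
    slenderness = end_factor * length_mm / r
    return math.pi**2 * modulus_mpa / slenderness**2

short = euler_critical_stress_mpa(200_000.0, 10.0, 30.0)     # L/d = 3: ~54 800 MPa, far above yield
slender = euler_critical_stress_mpa(200_000.0, 10.0, 200.0)  # L/d = 20: ~1 234 MPa
```

In this example, the longer specimen reaches an elastic buckling stress within the range of high-strength steels, so its results could reflect stability rather than material behavior.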

Bend And Flexural Testing

Bend testing is often used as a fast ductility screen and as a processing or weld quality indicator. The intent is simple: evaluate whether a material or a processed region can undergo a continuous bend without cracking or developing surface irregularities.
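The severity of a bend test is set mainly by the ratio of mandrel radius to thickness. The estimate below assumes the neutral axis stays at mid-thickness, which gives an outer-fibre strain of t / (2R + t). In real bends the neutral axis shifts inward, so this is an approximation for comparing mandrel sizes. The dimensions are hypothetical.

```python
def outer_fibre_strain(thickness_mm: float, inner_bend_radius_mm: float) -> float:
    """Approximate engineering strain at the outer surface of a bend: t / (2R + t).

    R is the inner bend (mandrel) radius; assumes a mid-thickness neutral axis.
    """
    return thickness_mm / (2.0 * inner_bend_radius_mm + thickness_mm)

e_r20 = outer_fibre_strain(10.0, 20.0)  # 0.20, about 20 % outer-fibre strain
e_r10 = outer_fibre_strain(10.0, 10.0)  # ~0.33
```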

For plastics and composites, flexural testing is commonly run as three-point bending on a universal testing machine. It’s a convenient way to characterize flexural strength and stiffness on beam-type specimens, and it is often specified when parts see bending loads in service.
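For a three-point bend on a rectangular beam, the reported values come from classical beam formulas. The sketch below uses outer-fibre stress σf = 3FL / (2bh²) and a slope-based modulus Ef = L³m / (4bh³), where m is the initial load–deflection slope. Some standards instead define modulus as a chord between two strain values, so treat the modulus form as an assumption. The specimen and readings are hypothetical.

```python
def flexural_stress_mpa(force_n, span_mm, width_mm, thickness_mm):
    """Three-point bending outer-fibre stress: sigma_f = 3 F L / (2 b h^2)."""
    return 3.0 * force_n * span_mm / (2.0 * width_mm * thickness_mm**2)

def flexural_modulus_mpa(slope_n_per_mm, span_mm, width_mm, thickness_mm):
    """Slope-based flexural modulus: E_f = L^3 m / (4 b h^3)."""
    return span_mm**3 * slope_n_per_mm / (4.0 * width_mm * thickness_mm**3)

# Hypothetical 80 x 10 x 4 mm bar on a 64 mm span (16 x thickness).
sigma = flexural_stress_mpa(100.0, 64.0, 10.0, 4.0)  # 60 MPa at 100 N
ef = flexural_modulus_mpa(50.0, 64.0, 10.0, 4.0)     # 5120 MPa for a 50 N/mm slope
```

The thickness appears squared in the stress and cubed in the modulus. A small thickness measurement error therefore has an amplified effect on reported flexural properties.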

Shear Testing

Shear testing becomes central in composites because in-plane shear properties can govern laminate behavior even when tensile strength looks acceptable. Composite shear methods typically report a shear stress–strain response plus derived values such as ultimate shear strength and shear chord modulus, which makes them directly usable for material models and allowables.
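One widely used composite shear method loads a [±45°] laminate in tension, as in ASTM D3518. The sketch below shows the data reduction for that approach: τ12 = P / (2A), γ12 = εx − εy, and a chord modulus between two chosen points. The chord points and loads are hypothetical, and the strain window for the chord should come from the method being followed.

```python
def shear_stress_pm45_mpa(force_n, cross_section_mm2):
    """In-plane shear stress from a [+45/-45] laminate tension test: tau12 = P / (2A)."""
    return force_n / (2.0 * cross_section_mm2)

def shear_strain_pm45(axial_strain, transverse_strain):
    """Engineering shear strain gamma12 = eps_x - eps_y (transverse strain is negative)."""
    return axial_strain - transverse_strain

def chord_modulus(gamma_1, tau_1, gamma_2, tau_2):
    """Chord shear modulus between two points on the shear stress-strain curve."""
    return (tau_2 - tau_1) / (gamma_2 - gamma_1)

tau = shear_stress_pm45_mpa(5_000.0, 25.0 * 2.0)  # 50 MPa on a 25 x 2 mm section
gamma = shear_strain_pm45(0.004, -0.0035)         # 0.0075
g12 = chord_modulus(0.002, 10.0, 0.006, 28.0)     # 4500 MPa
```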

Fatigue And Crack Growth

Fatigue testing is used when damage accumulates under repeated cycles rather than a single overload. In a typical force-controlled axial fatigue setup for metals, the focus is establishing fatigue behavior under constant-amplitude loading in air at room temperature, using notched or unnotched axial specimens depending on the objective.
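Constant-amplitude fatigue results are usually described by the stress ratio, amplitude, and mean, and are then summarized as an S–N curve. The sketch below computes the cycle parameters and fits Basquin's relation σa = σf′(2N)^b by least squares on log values. Basquin's form is one common curve-shape assumption, not part of any test method. The S–N points are synthetic.

```python
import math

def cycle_parameters(s_max, s_min):
    """Stress ratio R, amplitude and mean for constant-amplitude loading."""
    return {
        "R": s_min / s_max,
        "amplitude": (s_max - s_min) / 2.0,
        "mean": (s_max + s_min) / 2.0,
    }

def fit_basquin(amplitudes_mpa, cycles_to_failure):
    """Fit sigma_a = sf * (2N)^b by least squares on log10 values; returns (sf, b)."""
    xs = [math.log10(2.0 * n) for n in cycles_to_failure]
    ys = [math.log10(s) for s in amplitudes_mpa]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    sf = 10 ** (my - b * mx)
    return sf, b

cyc = cycle_parameters(300.0, 30.0)  # R = 0.1, amplitude 135 MPa, mean 165 MPa
# Synthetic S-N points generated from sf = 900 MPa, b = -0.1 (illustration only).
lives = [1e4, 1e5, 1e6]
amps = [900.0 * (2 * n) ** -0.1 for n in lives]
sf, b = fit_basquin(amps, lives)
```

Real fatigue data scatter widely, so practical programs fit many specimens per stress level and treat run-outs separately rather than fitting a few points.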

When the design philosophy assumes flaws are present and controlled growth matters, crack growth testing reports how fast a crack advances per load cycle (da/dN) as a function of the applied stress-intensity factor range (ΔK). That output is fundamentally different from “fatigue strength” and is used for damage-tolerance assessments.
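Crack growth data are often fitted with the Paris relation da/dN = C(ΔK)^m. They are then integrated to estimate how many cycles a crack needs to grow between two sizes. The sketch below does that numerically. It assumes a constant geometry factor Y, although real geometries have Y varying with crack size. The material constants and stresses are hypothetical.

```python
import math

def paris_life_cycles(a0_m, ac_m, stress_range_mpa, c, m, geometry_factor=1.0, steps=20_000):
    """Cycles to grow a crack from a0 to ac with da/dN = C * dK^m.

    dK = Y * d_sigma * sqrt(pi * a), in MPa*sqrt(m) with a in metres.
    Integrates dN = da / (C * dK^m) with the midpoint rule; Y is held constant.
    """
    da = (ac_m - a0_m) / steps
    cycles = 0.0
    for i in range(steps):
        a = a0_m + (i + 0.5) * da
        delta_k = geometry_factor * stress_range_mpa * math.sqrt(math.pi * a)
        cycles += da / (c * delta_k**m)
    return cycles

# Hypothetical: C = 1e-11 m/cycle per (MPa*sqrt(m))^m, m = 3, 100 MPa stress range,
# crack growing from 1 mm to 10 mm: about 7.8e5 cycles.
n = paris_life_cycles(0.001, 0.01, 100.0, 1e-11, 3.0)
```

Most of the life is spent while the crack is small, which is why the initial flaw size assumption dominates damage-tolerance estimates.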

Creep And Stress Rupture

Creep testing is used when the governing risk is time under load at elevated temperature. Standards distinguish between creep tests that quantify deformation under constant load and temperature, and rupture or stress-rupture tests that quantify time to rupture under the same controlled conditions. Together, they define load-carrying capability at temperature for applications where “holds for years” matters more than short-term strength.
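A creep test produces a strain–time curve. A common summary value is the minimum (secondary-stage) creep rate. The sketch below estimates it as the smallest least-squares slope over a sliding window of points. The window size is a tuning assumption, and noisy data may need smoothing first. The curve in the usage lines is synthetic.

```python
import math

def minimum_creep_rate(times_h, strains, window=21):
    """Minimum creep rate (strain per hour) as the smallest sliding-window LSQ slope."""
    best = math.inf
    for start in range(len(times_h) - window + 1):
        t = times_h[start:start + window]
        e = strains[start:start + window]
        mt, me = sum(t) / window, sum(e) / window
        slope = sum((ti - mt) * (ei - me) for ti, ei in zip(t, e)) / sum((ti - mt) ** 2 for ti in t)
        best = min(best, slope)
    return best

# Synthetic curve (illustration only): primary + secondary (2e-5 per hour) + tertiary terms.
times = [float(h) for h in range(301)]
strain = [0.005 * (1 - math.exp(-h / 20)) + 2e-5 * h + 1e-9 * max(0.0, h - 200) ** 3 for h in times]
rate = minimum_creep_rate(times, strain)  # close to 2e-5 per hour
```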

Fracture Toughness

Fracture toughness testing is used when crack-like defects are assumed present and a geometry- and constraint-aware toughness value is required, rather than a pendulum energy. Linear-elastic fracture toughness methods for metals use fatigue-precracked specimens and report toughness in units of stress times the square root of length. This makes the result directly compatible with fracture mechanics calculations and is one of the reasons it’s used when impact energy is not a sufficient basis for design decisions.
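Those units link directly to design through K = Yσ√(πa). The sketch below computes stress intensity, the critical crack size at which K reaches K_IC, and the ASTM E399 size check that thickness B and crack length a must each be at least 2.5(K_Q/σys)². The geometry factor Y = 1.12 is an assumed edge-crack value, and all inputs are hypothetical.

```python
import math

def stress_intensity(stress_mpa, crack_m, geometry_factor=1.0):
    """K = Y * sigma * sqrt(pi * a), in MPa*sqrt(m) when a is in metres."""
    return geometry_factor * stress_mpa * math.sqrt(math.pi * crack_m)

def critical_crack_size_m(k_ic, stress_mpa, geometry_factor=1.0):
    """Crack size at which K reaches K_IC: a_c = (1/pi) * (K_IC / (Y * sigma))^2."""
    return (k_ic / (geometry_factor * stress_mpa)) ** 2 / math.pi

def e399_min_size_m(k_q, yield_mpa):
    """Minimum B and a for a valid plane-strain result: 2.5 * (K_Q / sigma_ys)^2."""
    return 2.5 * (k_q / yield_mpa) ** 2

# Hypothetical: K_IC = 50 MPa*sqrt(m), 200 MPa applied stress, assumed Y = 1.12.
ac = critical_crack_size_m(50.0, 200.0, 1.12)  # ~0.0159 m, i.e. ~15.9 mm
b_min = e399_min_size_m(50.0, 500.0)           # 0.025 m for a 500 MPa yield strength
```

The size check explains why valid K_IC testing of tough, lower-strength materials can require very large specimens.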

Wear Testing

When performance is limited by friction and material loss rather than bulk strength, wear testing is used. A common approach is pin-on-disk under controlled sliding conditions, where wear behavior is measured and coefficient of friction can also be recorded.
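Pin-on-disk results (for example, under ASTM G99) are often normalized as a specific wear rate k = V / (F·s), together with an average coefficient of friction. The sketch below assumes that volume loss is estimated from mass loss and a known density, and that transfer films do not distort the mass change. The run values are hypothetical.

```python
def specific_wear_rate(mass_loss_g, density_g_cm3, normal_load_n, sliding_distance_m):
    """Specific wear rate k = V / (F * s) in mm^3 / (N*m); V from mass loss / density."""
    volume_mm3 = mass_loss_g / density_g_cm3 * 1000.0  # 1 cm^3 = 1000 mm^3
    return volume_mm3 / (normal_load_n * sliding_distance_m)

def mean_friction_coefficient(friction_forces_n, normal_load_n):
    """Average coefficient of friction mu = F_friction / F_normal over a run."""
    return sum(friction_forces_n) / len(friction_forces_n) / normal_load_n

k = specific_wear_rate(0.002, 7.85, 10.0, 1000.0)      # ~2.5e-5 mm^3/(N*m)
mu = mean_friction_coefficient([2.1, 2.3, 2.2], 10.0)  # 0.22
```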

Standards And Test Repeatability

Standards do two jobs at once. First, they define the method so everyone runs the same test, not a personal interpretation of it. Second, they define the controls that make results comparable across time, operators, machines, and laboratories. Without a standard, two numbers that look identical on paper may come from different specimen types, different loading rates, different conditioning, and different verification practices. In that situation the numbers are not truly comparable, even if both tests were performed “correctly” by local rules.


A key point is that major test standards are not isolated documents. They connect the test method to metrology. Tensile method standards, for example, explicitly reference separate calibration standards for the force-measuring system and for extensometer calibration. In other words, the method assumes the force and strain measurement chain has been verified to defined accuracy classes, not just “checked once.” The same logic repeats across other tests. Impact standards treat machine verification, friction losses, specimen geometry, and temperature handling as part of the measurement. Hardness standards require verification with traceable test blocks rather than relying on an instrument display alone. For polymers, Shore-type hardness standards include specific procedural controls that directly target reproducibility, including defined reading times and recommended use of a stand or applied mass.

Main Sources Of Variation In Lab Results

Before the checklist, one practical point: most scatter comes from small changes in how the test is set up and controlled. If those variables drift between runs or labs, the numbers can still look “valid” but won’t be truly comparable. Here are the main sources of variation to watch.

- Specimen Selection And Geometry: where the specimen is taken from, geometry conventions, machining quality, notch quality, surface condition.

- Preparation Route: sequence matters, especially when heat treatment or other process steps can change microstructure after machining.

- Test Environment And Conditioning: temperature windows, conditioning tolerances, and transfer times for temperature-controlled impact work.

- Rate Control: strain-rate or stress-rate choices affect strain-rate-sensitive materials and can shift reported yield and ductility values.

- Strain Measurement Method: verified extensometry versus indirect displacement methods, especially when elongation is part of acceptance.

- Operator Technique: setup consistency, alignment checks, indentation placement, reading timing for durometers, and handling discipline.

- Equipment Condition And Verification: calibration status of force systems, extensometer performance class, hardness block traceability, impact machine verification and friction checks.

What To Record In A Test Report

If someone else needs to reproduce the number, they need these inputs. Keep the report anchored to that rule.

- Standard And Edition used for the test method.

- Material Identification: grade, heat or batch, and any relevant processing condition.

- Specimen Details: type, dimensions, orientation if relevant, and preparation route (machining, finishing, notching sequence).

- Test Setup: fixture or configuration (tension, compression, bend, impact configuration), grip type where relevant.

- Test Conditions: temperature, conditioning method and duration, and any timing requirements that apply.

- Test Rate And Control Mode: what was controlled and how (for example, strain-based control versus stress-based control in tensile work).

- Measurement Chain Status: force calibration or verification reference, extensometer verification where used, hardness block traceability, impact machine verification checks.

- Reported Outputs And Units plus any notes required by the standard when results are outside recommended operating windows.
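One way to enforce this checklist is to treat the report as a structured record and flag empty items before release. The sketch below is a hypothetical record whose field names mirror the checklist. It does not represent any particular LIMS or reporting format. The sample entries are illustrative values.

```python
from dataclasses import dataclass, fields
from typing import List, Optional

@dataclass
class MaterialTestReport:
    """Hypothetical report record mirroring the checklist; field names are illustrative."""
    standard_and_edition: Optional[str] = None
    material_id: Optional[str] = None
    specimen_details: Optional[str] = None
    test_setup: Optional[str] = None
    test_conditions: Optional[str] = None
    rate_and_control_mode: Optional[str] = None
    measurement_chain_status: Optional[str] = None
    outputs_and_units: Optional[str] = None

    def missing_fields(self) -> List[str]:
        """Checklist items left empty, i.e. gaps that would block reproduction."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

# Illustrative, partially completed report (values are hypothetical).
report = MaterialTestReport(
    standard_and_edition="ISO 6892-1:2019, method A",
    material_id="Grade S355, heat 12345",
    specimen_details="Round, d0 = 10 mm, L0 = 50 mm, longitudinal",
    outputs_and_units="ReH = 380 MPa, Rm = 520 MPa, A = 24 %",
)
gaps = report.missing_fields()
```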

How To Choose The Right Material Test

Test selection is a controlled decision. QA teams and material testing labs choose methods based on what the product is expected to endure, what the specification requires, and what can be measured repeatably with available sampling and equipment. The logic is consistent across industries: define the risk, match it to a standardized output, then lock the conditions so results stay comparable over time.

Practical Filters Labs Use

Before a lab commits to a method, it typically runs through a short set of filters. These are the variables that determine whether a test will be meaningful for the product and defensible in reporting.

- Service Risk And Loading Mode: what actually drives failure in the field. Static overload and permanent deformation, sudden impacts, local contact damage, cyclic loading, or long-term load at temperature all point to different methods.

- Material Family And Product Geometry: metal, polymer, or composite behaves differently under rate and temperature. Geometry controls whether you can extract representative specimens, whether buckling is a risk in compression, and whether local gradients matter.

- Standard Or Customer Specification: many programs are method-driven from the start. The standard defines specimen type, conditioning, loading or deformation control, and what must be reported.

- Testing Purpose And Volume: production QC screening, qualification, supplier validation, and R&D comparisons have different sampling plans, throughput needs, and tolerance for test time.

- Reporting And Traceability Requirements: if the result must survive an audit or support supplier acceptance, the lab needs a method with clear documentation rules and a verified measurement chain.

Goal, Recommended Test, Typical Output

Once the filters are clear, labs usually map the goal to the simplest method that produces the required output under a recognized standard.

- Static overload or permanent deformation risk: tensile testing, reported as yield behavior, tensile strength, and ductility metrics.

- Rapid QC confirmation of material condition: hardness testing, reported as Rockwell, Brinell, Vickers, or Shore-type values depending on material and thickness.

- Brittle fracture risk under notched, rapid loading: impact testing (Charpy or Izod), reported as absorbed energy under defined notch geometry and temperature conditions.

- Specimens hard to extract or geometry-driven behavior: compression or bend-based methods, reported as compressive response or bend ductility indicators.

- Failure driven by cycles rather than one overload: fatigue or crack-growth testing, reported as fatigue behavior under controlled cycling or crack growth trends.

- Time-at-temperature governs deformation or rupture: creep or stress-rupture testing, reported as deformation over time and time-to-rupture under constant conditions.

Looking For Material Testing Equipment?

If you’re building a material testing lab, upgrading an existing setup, or filling a specific gap in your equipment lineup, you’re in the right place. NextGen Material Testing supplies testing equipment across multiple material categories and test types, and we support customers through selection, setup, and long-term use.

- Metal Testing Equipment: hardness testing systems, universal testing machines (tensile, compression, bend/flex), impact testers, and related lab solutions

- Plastic Testing Equipment: tensile and impact testing systems, plus hardness testing solutions used in polymer QC

- Rubber Testing Equipment: Shore and IRHD-type hardness testing workflows, plus common mechanical testing setups for rubber QA

- Cement & Concrete Testing Equipment: equipment for cement and concrete testing workflows

- Soil Mechanics Testing Equipment: equipment for geotechnical and soil testing workflows

- Rock Mechanics Testing Equipment: equipment for rock mechanics testing workflows

If you already know what you need, request a quote and we’ll help you move quickly. If you’re not sure which standard, configuration, or capacity matches your materials and test volume, contact our team for a short consultation and we’ll recommend a practical setup for your lab.

Turning Test Results Into Decisions

Material testing only earns its place in a lab when the results stay comparable and actionable. For metals and plastics, that means using standardized methods to measure the few properties that most often separate “acceptable” from “risky”: strength and ductility under static load, hardness as a fast indicator of material condition, and impact behavior when rapid, notch-sensitive fracture is a concern.

A solid testing program starts with that mechanical core and expands only when the service risk demands it. If geometry or loading makes tension specimens unrepresentative, labs add compression or bending screens. If failure is cycle-driven, fatigue and crack-growth data becomes the correct language. If temperature and time govern performance, creep and stress-rupture testing replaces short-term strength checks. If crack tolerance is the design assumption, fracture toughness is the defensible metric.

The common denominator is control and documentation. Specimen preparation, conditioning, loading rate, measurement technique, and verification practices determine whether numbers can be compared across batches, suppliers, and years. When those inputs are locked and reported, material testing becomes a repeatable decision tool rather than a one-off measurement.